USERS FRONT AND CENTER: Through Soldier-centered design, end users are front and center in the development process. Rather than testing a prototype after design has been completed, this approach emphasizes early and frequent feedback from Soldiers throughout the process. (Image by Getty Images)

While sometimes theoretically at odds, Agile development and Soldier-centered design can be mutually beneficial, as shown in 12 lessons learned.

by Pam Savage-Knepshield, Ph.D., Lt. Col. Jason Carney, Maj. Brian Mawyer and Alan Lee

Over the last 10 years, the U.S. Army has worked to change the fundamental underpinnings of the acquisition process and shed its reputation for being slow, frustrating, complicated and expensive. The promise of Agile acquisition to enable responsive delivery of capabilities based on continuous user feedback (Soldier touch-point events) has become a reality. Agile development integrates design, development and testing into an iterative life cycle to deliver incremental releases of capability. Soldier-centered design places those who ultimately use the system squarely in the center of the design process, ensuring that their feedback and needs are the foremost consideration when making design trade-offs and decisions. At face value, it appears the two should dovetail nicely; both are philosophies, and both focus on iteration and inclusion of the end user. Despite these commonalities, we encountered several differences that played a significant role in their successful execution and integration. Overcoming these points of contention is the focus of this article.

BACKGROUND: THE SOFTWARE MODERNIZATION PROGRAMS

The Project Manager for Mission Command, within the Program Executive Office for Command, Control, Communications-Tactical (PEO C3T), integrated Agile and Soldier-centered design during two software modernization efforts—the Advanced Field Artillery Tactical Data System (AFATDS) 7.0 and Precision Fires Dismounted Block 2. AFATDS is the primary system used for planning, coordinating, controlling and executing fires and effects for field artillery weapon platforms and for Long-Range Precision Fires Cross-Functional Team initiatives, which are among the Army’s primary modernization lines of effort. Precision Fires Dismounted is used by forward observers on the frontline to transmit digital calls-for-fire and precise target coordinates to AFATDS for dynamic target prosecution. Although the software efforts for these programs vary along many dimensions such as size, complexity and team composition, we found our lessons learned applicable to both and extensible to non-Agile development efforts as well.

SOLDIER-CENTERED FROM THE START

Soldier-centered design is so tightly woven into the fabric of the Precision Fires Dismounted program office that, when it came time to request authorization from the milestone decision authority to proceed with limited deployment, it seemed only natural to invite Soldiers to the meeting. Forward observers from 1-320th Field Artillery Regiment, 2nd Brigade Combat Team, 101st Airborne Division (Air Assault) had participated in user juries and usability testing. Brig. Gen. Robert M. Collins, the Program Executive Officer for Command, Control, Communications-Tactical, asked them to weigh in on the go or no-go decision. They explained that, because the program office and developers listened to them and implemented the changes they requested, the system was much improved and they were looking forward to using it: “the earlier the better.”

One Soldier claimed that “it is 20 times better than the original PF-D.” Whether it is 20 times better is difficult to measure; however, usability test results have demonstrated a 40 percent increase in the ability of Soldiers to accomplish critical tasks on the first attempt, a measure of the intuitiveness of the user interface. A 29-point increase in its mean score on the industry-standard System Usability Scale brought the modernized system’s score 20 points above what is considered average and within the top 10 percent of scores for all commercial products tested. Products with scores this high are more likely to be recommended to a friend. Anecdotal evidence supporting this assertion came from one usability test participant, who explained, “I was talking with some of the Special Ops JTACs [Joint Terminal Attack Controllers] I work with about this, and they thought it would be really cool to use because Call for Fire is something that we require them to do.” To expand on Kevin Costner’s famous line from “Field of Dreams”: If you build it right, they will come. The only way to build it right is to include Soldiers in the process.
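For readers unfamiliar with how a System Usability Scale score is produced, the following is a minimal sketch of the standard scoring rule for the 10-item questionnaire: odd-numbered items contribute the rating minus 1, even-numbered items contribute 5 minus the rating, and the sum is multiplied by 2.5 to give a score from 0 to 100. The function names and the sample ratings are hypothetical and are not data from either program.

```python
from statistics import mean


def sus_score(ratings):
    """Score one completed SUS questionnaire (10 ratings, each 1 to 5) on a 0-100 scale."""
    if len(ratings) != 10 or any(r not in (1, 2, 3, 4, 5) for r in ratings):
        raise ValueError("SUS needs exactly 10 ratings, each an integer from 1 to 5")
    total = 0
    for item_number, rating in enumerate(ratings, start=1):
        if item_number % 2 == 1:
            # Odd-numbered items are positively worded: higher rating is better.
            total += rating - 1
        else:
            # Even-numbered items are negatively worded: lower rating is better.
            total += 5 - rating
    return total * 2.5


def mean_sus(questionnaires):
    """Mean SUS score across all participants in one test event."""
    return mean(sus_score(q) for q in questionnaires)


# Hypothetical ratings from three participants (illustration only).
sample_event = [
    [3, 3, 3, 3, 3, 3, 3, 3, 3, 3],
    [4, 2, 4, 2, 4, 2, 4, 2, 4, 2],
    [5, 1, 5, 1, 5, 1, 5, 1, 5, 1],
]
print(mean_sus(sample_event))
```

Comparing the mean score from one release to the next is one way to track a change such as the 29-point increase described above.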

POINTS OF CONTENTION

Agile is typically a developer-led philosophy, whereas Soldier-centered design is driven by human factors practitioners, human-systems integration analysts or user experience professionals. The Agile Scrum approach minimizes up-front planning in favor of producing code quickly, whereas Soldier-centered design maximizes up-front planning informed by user research (Soldier touch-point events) to produce a rough design that will evolve and crystallize through iterative user testing into a concrete final product. Agile measures progress by the amount of working software developed, while Soldier-centered design measures progress by how well users can achieve their goals using the system.

INCLUDE DEVELOPERS IN SOLDIER TOUCH POINTS: Samantha Mix, left, a Leidos User-Centered Design Team member, discusses user interface requirements with a Soldier at Fort Sill, Oklahoma. An early, accurate understanding of users’ goals and the context in which they will use a system is crucial for the design of an effective user interface. (Photos and illustrations by Dr. Pam Savage-Knepshield)

Just like other government program managers before us, we followed the Defense Acquisition Framework and integrated aspects of Agile development: sprints, releases, user stories, backlogs and burndown. We merged Agile development with Soldier-centered design to lay the foundation for system design, identifying the most frequent, critical and problematic tasks that require design emphasis and documenting user stories by observing Soldiers at work in the field and sitting with them as they demonstrated system use during normal operations. We also conducted online surveys, focus groups and participatory design sessions. When software releases became available, we began conducting usability tests to identify design issues and enhancements, which is where the focus remains for both programs at present. Along this journey, we found some things worked well and some did not. Here, we focus on what worked well and what we will do differently in the future.

LESSONS LEARNED

The top 12 lessons learned during our efforts to integrate Soldier-centered design into Agile development include the following:

Build a strong design foundation with early Soldier-centered design activities.

Bringing human factors and human-systems integration onboard early to lead Soldier touch-point events builds a solid design foundation by validating user needs, documenting workflows, developing user stories and identifying what is working well and what is not, as well as uncovering feature sets requiring design emphasis and capability gaps existing in manual processes and legacy systems. With these essential building blocks, the Soldier-centered design team will create a rough design of the user interface that will evolve through iterative testing with users, often referred to as developmental operations (DevOps).

INTO AGILE DEVELOPMENT: The integrated design process reflects our lessons learned merging the two processes—identifying who best to lead specific design and development activities during each phase of the process as well as key deliverables that should influence or incorporate Soldier touch-point events.

Create multidisciplinary, user-focused, collaborative teams.

Assembling multidisciplinary, collaborative teams whose members (developers, designers, engineers, domain subject matter experts, logistics, training, safety, cyber and testers) attend at least one Soldier touch-point event enables each team member to approach design and development with the user’s context in mind. Most developers have never met a user and have no concept of the environments in which users work. The insights and empathy gained through immersion in a user’s experience will create a common bond within the team and inspire all to stay focused on users and their needs even under the crunch of rapid development cycles.

Ensure development of a user interface style guide for use by all Agile teams.

A style guide contains a set of design guidelines that specifies visual presentation of content, interactive elements and how they behave (buttons, form fields, dialog boxes, menus, navigation, etc.).

Ideally, a style guide should include reusable code snippets for front-end design elements to increase adoption by separate small Agile teams and should be in place before the start of coding. Adhering to the guide will ensure a consistent look and feel across feature sets, enabling users to transfer knowledge gained from using one feature set to another. Users can focus on performing a task rather than having to learn how the system works every time they use another one of its features. During internal vendor testing, screen layouts and interactive elements should be compared against the style guide to ensure compliance. Doing so will reduce the backlog created by identification of design inconsistencies during usability testing.

DEVELOP A STYLE GUIDE: The items depicted are crucial components for inclusion in a user interface style guide. Style guides help ensure consistency across all elements of the user interface and provide source content for training and documentation developers.

Refine the design with user feedback early and often.

The Soldier-centered design team needs to work one step ahead of a sprint to collect user feedback, document the design and hand it off when development is ready to begin. They need to proactively test design assumptions and tackle designs ahead of the rest of the team. When using online surveys to gather quick feedback for alternate design implementations, users should perform representative tasks using the designs; grounding feedback in realistic scenarios will increase the validity of results. See Figure 3.

Take time to plan when to conduct usability tests; sprints do not provide an obvious point for inserting tests. Do not wait for perfect, clean code to reach out to users for feedback. Start early using paper prototypes, mockups, wireframes, simulations, screens strung together in a slide presentation or even emerging code. Neither the design nor the code needs to be complete to conduct part-task testing. Conduct smaller, more frequent Soldier touch-point events.

REFINE THE DESIGN: A screen shot from an online survey designed to collect preference and performance data. Results determined which of two design alternatives should be implemented based on Soldier feedback.

Create user advisory panels.

Assemble a large user advisory panel and selectively invite members to provide feedback during Soldier touch-point events on a regular or ad hoc basis.

Identify design goals and usability metrics.

Identify design goals and establish performance and preference measures and metrics up front to drive design. For an intuitive design, consider a target such as this: 85 percent of target users who have completed advanced individual training for their military occupational specialty will be able to accomplish critical tasks on the first attempt.
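A target like this can be checked directly against usability test results. The sketch below is one possible way to do so, assuming the metric is read as the share of participants who complete each critical task on the first attempt; the task names, participant labels and results are hypothetical.

```python
from collections import defaultdict

TARGET = 0.85  # 85 percent first-attempt success, as in the example target


def first_attempt_rates(results):
    """Return {task: share of participants who succeeded on the first attempt}.

    `results` is an iterable of (participant, task, succeeded_first_try) tuples.
    """
    passed = defaultdict(int)
    total = defaultdict(int)
    for _participant, task, succeeded in results:
        total[task] += 1
        if succeeded:
            passed[task] += 1
    return {task: passed[task] / total[task] for task in total}


def tasks_below_target(results, target=TARGET):
    """List the tasks whose first-attempt success rate misses the target."""
    rates = first_attempt_rates(results)
    return sorted(task for task, rate in rates.items() if rate < target)


# Hypothetical results for 10 participants on two tasks (illustration only).
results = (
    [(f"P{i}", "send_call_for_fire", i != 0) for i in range(10)]   # 9 of 10 succeed
    + [(f"P{i}", "configure_modem", i < 6) for i in range(10)]     # 6 of 10 succeed
)
print(first_attempt_rates(results))
print(tasks_below_target(results))
```

Tasks that fall below the target are natural candidates for design emphasis in the next iteration.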

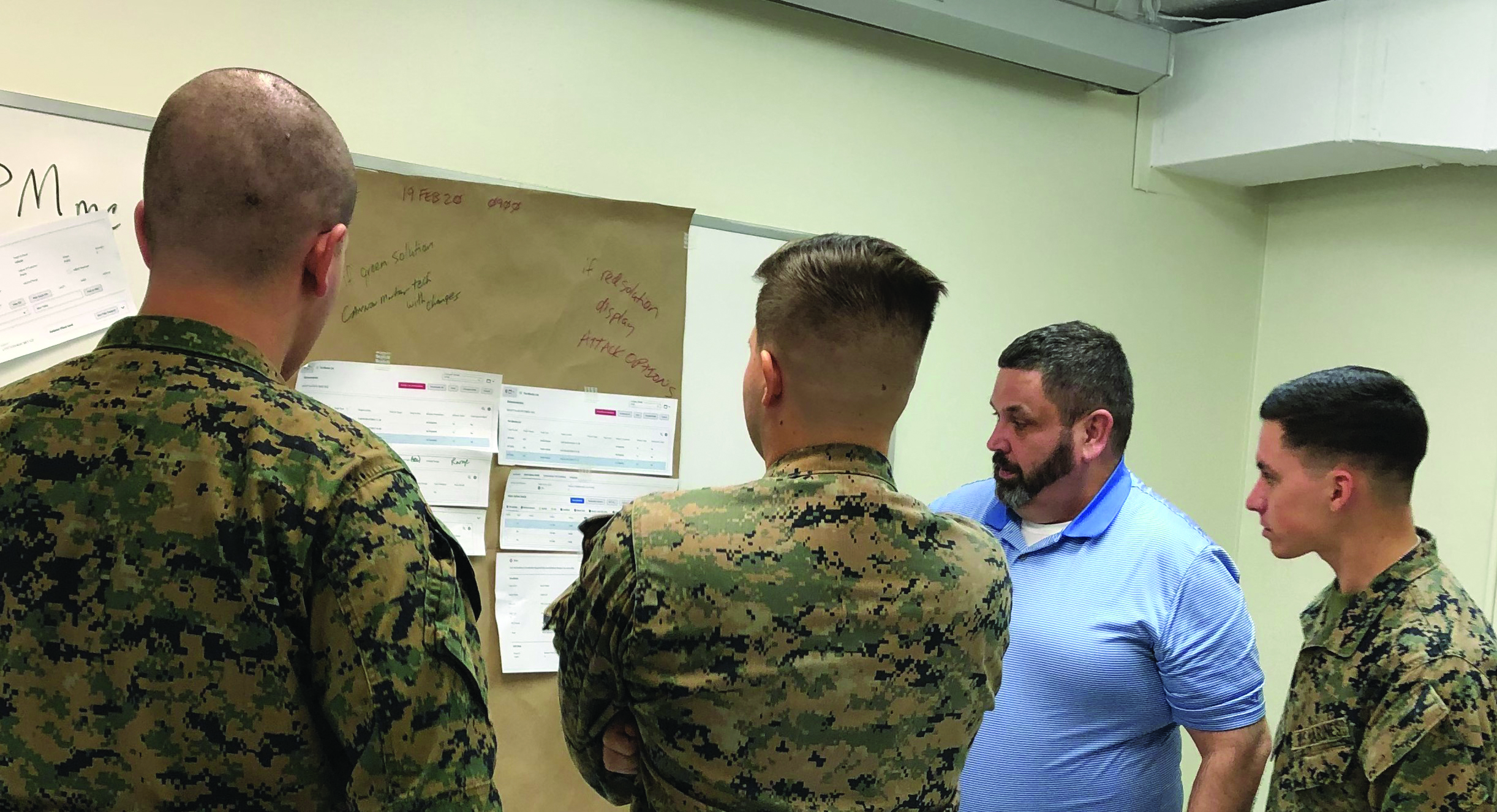

PARTICIPATORY DESIGN: Scott Sines, CACI International, Inc., moderates a participatory design session with Marines at Fort Sill, Oklahoma. During the session, Marines created paper prototypes of mocked up display screen content and task flows that they believed would support their ability to meet the “Five Requirements for Accurate Fire” and field artillery time standards for fire mission processing.

Standardize Soldier touch-point event procedures.

Standardize usability test procedures and data-collection materials, creating templates for reuse during subsequent Soldier touch-point events to save time and ensure consistent data collection. Face-to-face usability testing is generally preferred; however, conducting virtual tests using Microsoft Teams has proven to be a viable option.

The Soldier-centered design team will validate requirements during Soldier touch-point events. Take advantage of this data to eliminate irrelevant requirements while exercising caution to avoid costly feature creep.

Fuel innovation with user feedback.

When you put your users in the center of the design process, they will tell you what is working well and what is not. Delivering real-world, operational capability enhancements relies on one fundamental principle: listening to users. We did just that when users of the legacy AFATDS system described their issues with a tactical modem during early Soldier touch-point events, and again later when Precision Fires Dismounted users’ frustration with the same modem drove usability metrics down to an unacceptable level.

We handed off the issue to Army engineers, who developed a prototype and revised it in real time based on user feedback. They focused on ease of use by designing a “smart” modem, one that communicates with the host application to either correct misconfigured settings or notify the user to take corrective actions when the system cannot. Not all feedback will result in innovation; however, if you are hearing consistently that something is problematic, it probably warrants further investigation.

THE FEEDBACK LOOP: Dr. Katy Badt-Frissora, left, a Leidos User-Centered Design Team member, facilitates a usability test session with one Soldier while another observes their interaction at Fort Riley, Kansas. For this test, Soldiers were not provided training prior to participation because testing was designed to gauge the intuitiveness of the system’s user interface.

Include the team and stakeholders in Soldier touch-point events.

Have team members and stakeholders participate in Soldier touch-point events as observers, facilitators and data collectors. This will increase buy-in for iterative design changes.

Ensure funding and resources are set aside for Soldier touch-point events.

None of these activities is without cost; ensure that funds for travel and equipment have been included in cost projections and that the availability of personnel to participate in Soldier touch-point events has been appropriately considered in sprint planning.

Ensure Soldier-centered design changes are included in the configuration management process.

Army Regulation 602-2, “Human Systems Integration in the System Acquisition Process,” describes how to implement Department of Defense Instruction 5000.02 by emphasizing front-end planning to enhance design, reduce life cycle ownership costs, improve safety and survivability, and optimize total system performance. Soldier-centered design ensures Soldier inclusion in the acquisition process. In the U.S. Army, human-systems integration analysts, who are typically human factors engineers or research psychologists, develop human-systems integration plans documenting the Soldier-centered design process and planned Soldier touch-point events. They also develop standardized usability measures and metrics and data collection instruments to measure and track progress.

Human-systems integration analysts draft an issues tracker, a companion document to the human-systems integration plan, to monitor the status of issue mitigation. Before major acquisition milestones, unresolved issues in the tracker are reported in an assessment to the milestone decision authority. Rather than using a spreadsheet to track issues, analysts can complement Agile development by leveraging the same tools used for configuration management.

The human-systems integration plan and issue tracker are not contractually binding documents. Formal contractual agreements should specify how issues will be categorized (defect, enhancement), prioritized (high, medium, low) and monitored during the configuration management process. Furthermore, incentives must be established to encourage a higher level of performance.
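As an illustration of what such an agreement might cover, the sketch below models an issue record with the category and priority values named above and produces the list of unresolved issues, highest priority first, that an assessment before a milestone could draw on. The class names, field names and sample issues are hypothetical and do not represent either program's configuration management tool.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    DEFECT = "defect"
    ENHANCEMENT = "enhancement"


class Priority(Enum):
    HIGH = 1
    MEDIUM = 2
    LOW = 3


@dataclass
class Issue:
    title: str
    category: Category
    priority: Priority
    resolved: bool = False


def unresolved_for_milestone(issues):
    """Return unresolved issues ordered from high to low priority."""
    open_issues = [issue for issue in issues if not issue.resolved]
    # sorted() is stable, so issues of equal priority keep their tracker order.
    return sorted(open_issues, key=lambda issue: issue.priority.value)


# Hypothetical tracker contents (illustration only).
tracker = [
    Issue("Button label inconsistent with style guide", Category.DEFECT, Priority.LOW),
    Issue("Modem settings silently misconfigured", Category.DEFECT, Priority.HIGH),
    Issue("Add shortcut for repeat fire mission", Category.ENHANCEMENT, Priority.MEDIUM),
    Issue("Map fails to refresh after zoom", Category.DEFECT, Priority.HIGH, resolved=True),
]
for issue in unresolved_for_milestone(tracker):
    print(issue.priority.name, issue.category.value, issue.title)
```

Keeping these records in the same tool the developers use for configuration management means the status of each issue is visible to both teams.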

DEVELOP A COMPREHENSIVE HSI PLAN: The table of contents from the Precision Fires Dismounted Block 2 human-systems integration plan conveys the critical elements that should be included in the human-systems integration, or HSI, plan. For example, it should describe usability measures and metrics, issue severity descriptions, agreements made with the vendor to track and mitigate issues, Soldier touch-point events that are planned and sample questionnaires that will be used during Soldier touch-point events to collect user feedback.

CONCLUSION

Although our lessons learned were derived from merging the two processes for software development, they are equally applicable to hardware products and platforms, as demonstrated by the middle tier acquisition rapid prototyping effort, the Integrated Visual Augmentation System. This project, which is developing a heads-up display with a wide array of capabilities, used Soldier touch-point events to understand users’ needs during the rapid, iterative development and evaluation of prototype designs.

Our lessons learned are also extensible to traditional acquisition, phased product development, the waterfall process model and spiral system development. Whichever acquisition process or model is used to develop hardware or software and procure commercial off-the-shelf products, early and iterative Soldier feedback is critical for ensuring systems meet user expectations well before they are fielded. As we have learned, Soldiers’ needs are not static; they evolve in response to new threats, advances in technology and changes in force structure, doctrine, tactics, techniques and procedures. Including Soldiers throughout the process helps ensure your system’s design maintains relevance.

Agile and Soldier-centered design were conceived to improve upon traditional development processes. Although they share a common goal, historically the challenges and barriers to their successful integration have been difficult to overcome. However, by leveraging the strengths of each and understanding how teams can overcome inherent points of contention, the Army will be positioned to more quickly develop capabilities that users find useful and usable.

For more information about usability measures and metrics, conducting Soldier touch-point events, and Soldier-centered design, contact Dr. Savage-Knepshield at pamela.a.savage-knepshield.civ@mail.mil.

PAM SAVAGE-KNEPSHIELD, PH.D., is a research psychologist in the U.S. Army Combat Capabilities Development Data and Analysis Center leading human-systems integration and Soldier-centered design for the product manager Fire Support Command and Control at PEO C3T. A former distinguished member of technical staff at Lucent Technologies/Bell Laboratories, she has a Ph.D. in cognitive psychology from Rutgers University, a B.A. in psychology from Monmouth University, and is a Fellow of the Human Factors and Ergonomics Society.

LT. COL. JASON CARNEY is the product manager for Fire Support Command and Control at PEO C3T. He received an M.A. from Webster University and a B.A. from the University of South Alabama as well as Boise State University. He is Level III certified in program management and Level II certified in test and evaluation, and is a member of the Army Acquisition Corps.

MAJ. BRIAN MAWYER is the assistant product manager for Fire Support Command and Control at PEO C3T. He holds an MBA and master’s in project management (MPM) from Western Carolina University, an M.S. in strategic communication from Troy University, an MBA from the University of Georgia, and is Level I certified in program management.

ALAN LEE is the assistant product manager for the Precision Fires Dismounted system. He holds an M.S. from Monmouth University in software engineering and a B.S. in electrical engineering from Brown University. He is Level III certified in engineering and is a member of the Army Acquisition Corps.

James Goon, deputy product manager for Mission Command Cyber, PEO C3T, contributed to this article.

Read the full article in the Spring 2021 issue of Army AL&T magazine.

