The COVID-19 pandemic forced the U.S. Army Operational Test Command to innovate during a new system's operational test.

by Maj. Graham L. Mullins

The proverb "Necessity is the mother of invention" aptly describes operational testing during the coronavirus pandemic.

The General Fund Enterprise Business System (GFEBS) replaces multiple legacy financial management systems that had been in use for decades and integrates them across the Army's functional business process areas. General Fund Enterprise Business System – Sensitive Activities (GFEBS-SA) does all that and also restricts access to information based on each user's need to know. The GFEBS-SA initial operational test and evaluation had been planned for more than 18 months in accordance with traditional enterprise system testing procedures. The U.S. Army Operational Test Command team's plan included travel to test unit locations to observe the system in operation. Although COVID-19 prevented in-person testing, the project manager (PM) still needed an assessment before the fielding-decision deadline.

THE PROBLEM

The operational test was to be performed on a live, globally fielded system. This meant Army civilians would begin using GFEBS-SA to process sensitive financial activities in May 2020, whether or not testing had been completed. Without thorough testing, the PM shop would have no way to ensure the software performed as intended. COVID-19 and the swift DOD travel ban that followed, however, made the planned on-site testing impossible and forced a postponement. The PM, in conjunction with Operational Test Command, had to find a way to complete the testing within the constraints imposed by the timeline and COVID-19.

A SOLUTION

In the new normal of COVID-19, the team had no choice: testing had to go on. The test team devised a distributed testing method in which test participants and data collectors were spread around the country rather than co-located at the test site. Testing this way increases the complexity of planning and executing the test and the collaboration it requires; however, it also reduces travel, travel costs and physical interaction. Test teams regularly travel the country, working on-site with users of new equipment to put it through its paces. That ability to work side by side with users is useful and productive, but in a pandemic it is also exceedingly dangerous. Yet it was imperative that Operational Test Command conduct this test, because if the system did not work as intended, classified information would be vulnerable to collection.

The test team managed the operation from Fort Hood, Texas, and connected electronically to six of the units under test via the Secret Internet Protocol Router (SIPR) and Non-classified Internet Protocol Router (NIPR) networks. A combination of technology and creative thinking enabled the test team to collect the information required to determine whether the program succeeded or failed. The team used two types of monitoring software during the test: Morae and Elastic Stack. Morae is screen-capture software that records user sessions so analysts can review each interaction and verify task completion. Elastic Stack is a suite of tools for collecting, analyzing and visualizing data. During this test, Elastic Stack monitored the GFEBS-SA server and produced performance logs. This instrumentation was critical because it compensated for any gaps in the manual data collection process.

WIDE DISPERSION: This map illustrates the geographic dispersion between test team headquarters and test site locations. Testing headquarters was in Arlington, Virginia, while additional test participants were located at Rome, New York; Indianapolis, Indiana; Charlottesville, Virginia; San Antonio, Texas; and Fort Gordon, Georgia. (Images courtesy of U.S. Army Operational Test Command)

Traditionally, data collectors are physically located with the users to observe and review all transactions. If an incident arises during a traditional test, a data collector documents it in a test incident report. For the GFEBS-SA test, however, the distributed method required the test team to rely on the users to capture all test incidents. This significant change to its normal data collection process allowed Operational Test Command to record the same data without traveling to or physically interacting with the test units. Collecting the test data from Fort Hood produced significant savings in travel costs.

CHALLENGES

The most arduous part of the new process was obtaining approval to load Morae on the test units' computers. GFEBS-SA operates on the SIPR network because of the classified nature of the information it handles, and the security and network professionals who control these networks were very apprehensive about loading new software onto them. Approval required extensive coordination among the Electronic Proving Ground at Fort Huachuca, Arizona, the software owners and the network professionals at the test unit sites. Because the screen-capture software would record classified data, intelligence and security representatives from the unit sites also had to be involved to facilitate redaction of classified material. This level of coordination was time-intensive and created numerous friction points throughout test execution. Each headquarters and functional proponent had its own list of requirements the test team had to satisfy before it could load the software. Additionally, because the test team could not travel, the IT departments at the installations had to install the instrumentation software themselves.

Distributed testing requires the test team and player units to embrace an innovative shift in both test preparation and execution. The player unit must take a more active role in the overall process because its members become both operators and data collectors. Not only must the player unit continue doing its job using the new software, it also must be prepared to fill out test forms as system issues arise. The player unit must be trained to fill out the forms properly, and testers must foster open communication with the unit to ensure proper data collection and test execution.

To accomplish this, the test unit must allot time for data-collection training in addition to new-equipment training, which prepares test units to operate and maintain the system under test. Test units must also identify and empower a test lead to coordinate directly with Operational Test Command. The test lead serves as the single point of contact, reducing the workload on individual test participants.

A valid test depends on the test team's ability to capture, transmit and synthesize data. Distributed testing complicates each of these by requiring more coordination and a longer lead time. Moreover, because of the pandemic, the player unit's office was minimally staffed and test participants came into the office only as needed, which increased the time required to complete the end-to-end data flow. To work around the test unit's restrictions and work schedule, the test team had to send a NIPR email to the site leads asking each test participant to come to the office to complete each task. The site leads would obtain approval from their leadership, and the test participant could then come into the office and check their SIPR email. In a scripted test, these requests could easily have been forecast. On a live system, however, they created significant lead-time issues.

The test team also had trouble getting test participants to complete demographic forms and user surveys. Tracking the number of completed forms and attributing them to each site lead during daily test update briefs alleviated the problem. However, it was not fully solved until the team sanitized the forms of all classified data and transmitted them over NIPR. When planning the GFEBS-SA test, Operational Test Command did not anticipate how complex these issues would be or how much they would lengthen the test. The test team therefore recommended extending the test by up to 25 percent to account for the longer lead time.

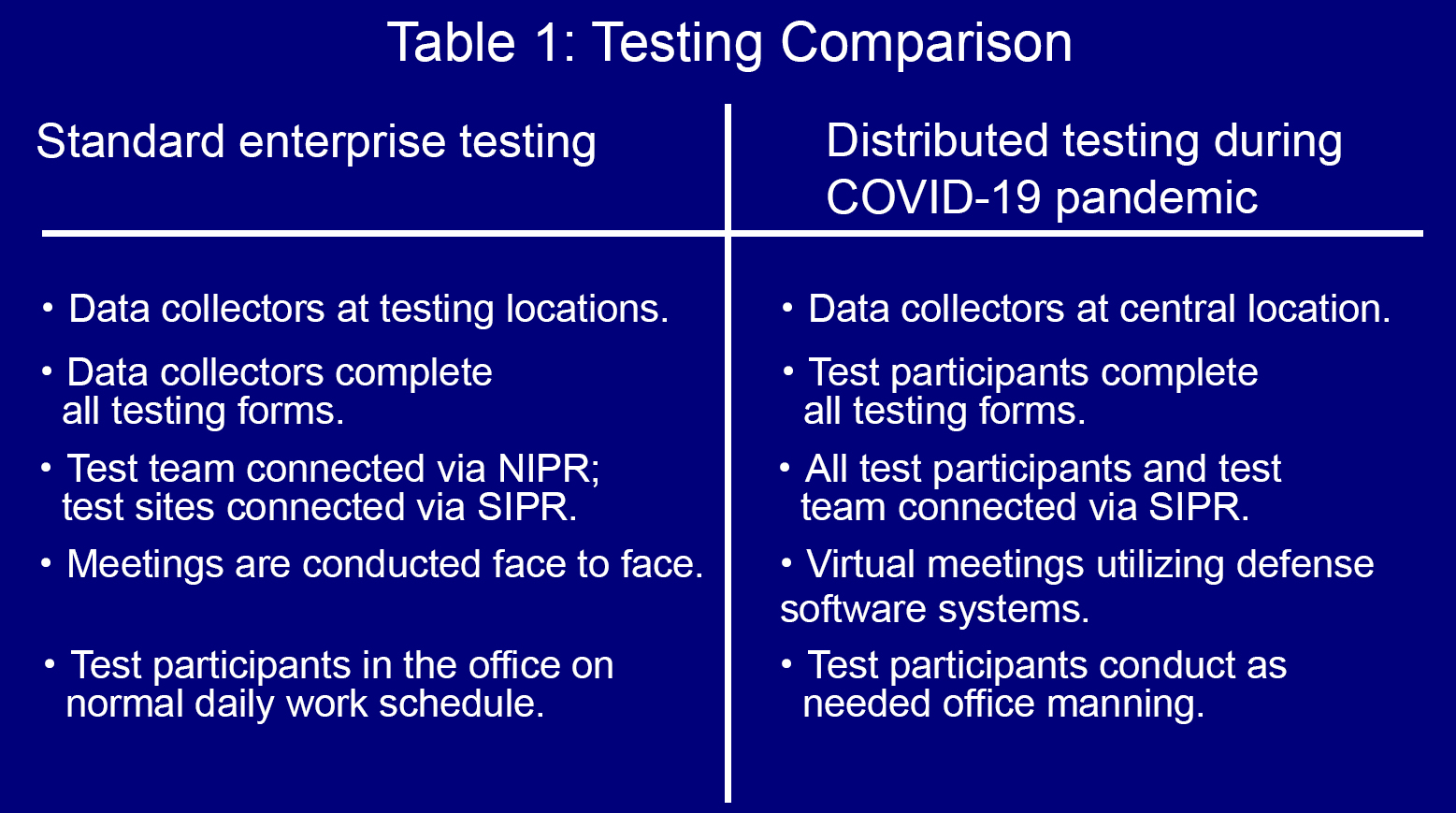

COMPARE AND CONTRAST: This table compares standard enterprise testing with the distributed testing that occurred during the COVID-19 pandemic.

NETWORK AND SOFTWARE RELIABILITY

During normal testing, data collectors are co-located with the test unit, and there is minimal reliance on network connectivity. When conducting distributed testing, however, the testers are wholly dependent on the network infrastructure at their Fort Hood-based test headquarters. During the GFEBS-SA test, several network outages delayed testing. Relying solely on SIPR and NIPR connectivity created a single point of failure for test execution. During normal test execution, the team would fall back on paper records during an outage and transfer them once the network was restored. To maintain connectivity during outages in this test, the team used mobile Wi-Fi for unclassified communication. These devices had limited utility, however, because they could carry only NIPR traffic while the test ran on a classified network. Beyond the network, the test team also depended on several defense software systems that were not always operational, and their unreliability caused further testing delays.

For GFEBS-SA, the team relied heavily on instrumentation to detect and record the business transaction volume that the Army Evaluation Center required. Elastic Stack captured all transactions processed on the GFEBS-SA server. This efficient collection method accounted for more than 80 percent of the test's total transactional data. Combined with the more detailed task performance forms, this data allowed the data managers to provide the Army Evaluation Center with an accurate end-to-end view of the process and sufficient data volume to evaluate the program.

CONCLUSION

Maintaining flexibility is imperative to successfully accomplishing a test, no matter what impediments arise, even those imposed by COVID-19. The distributed testing process gave the Operational Test Command team the adaptability to align resources with test objectives. The shift to a distributed test also ensured a safe environment for everyone involved. In addition, distributed testing reduced costs to the taxpayer while meeting the requirements for a successful operational test.

In the future, test teams that want to use instrumentation on classified systems should hold weekly coordination meetings beginning six months before the operational test. Doing so will ease the concerns of network and security personnel and help keep the test on schedule. Increasing the length of the test by up to 25 percent would account for potential network and system downtime, and using secondary data sources would help ensure accurate data and sufficient data volume.

For more information, please contact Operational Test Command’s public affairs officer Michael Novogradac at michael.m.novogradac.civ@mail.mil, or go to https://www.eis.army.mil/.

MAJ. GRAHAM L. MULLINS is an acquisition officer (51A) currently serving as a test officer within the U.S. Army Operational Test Command, Fort Hood, Texas. He previously served as an assistant product manager within the Program Executive Office for Command, Control and Communications – Tactical, Aberdeen Proving Ground, Maryland. He holds an MBA from Vanderbilt University and a B.S. in mechanical engineering from North Carolina State University.

Read the full article in the Winter 2021 issue of Army AL&T magazine.
