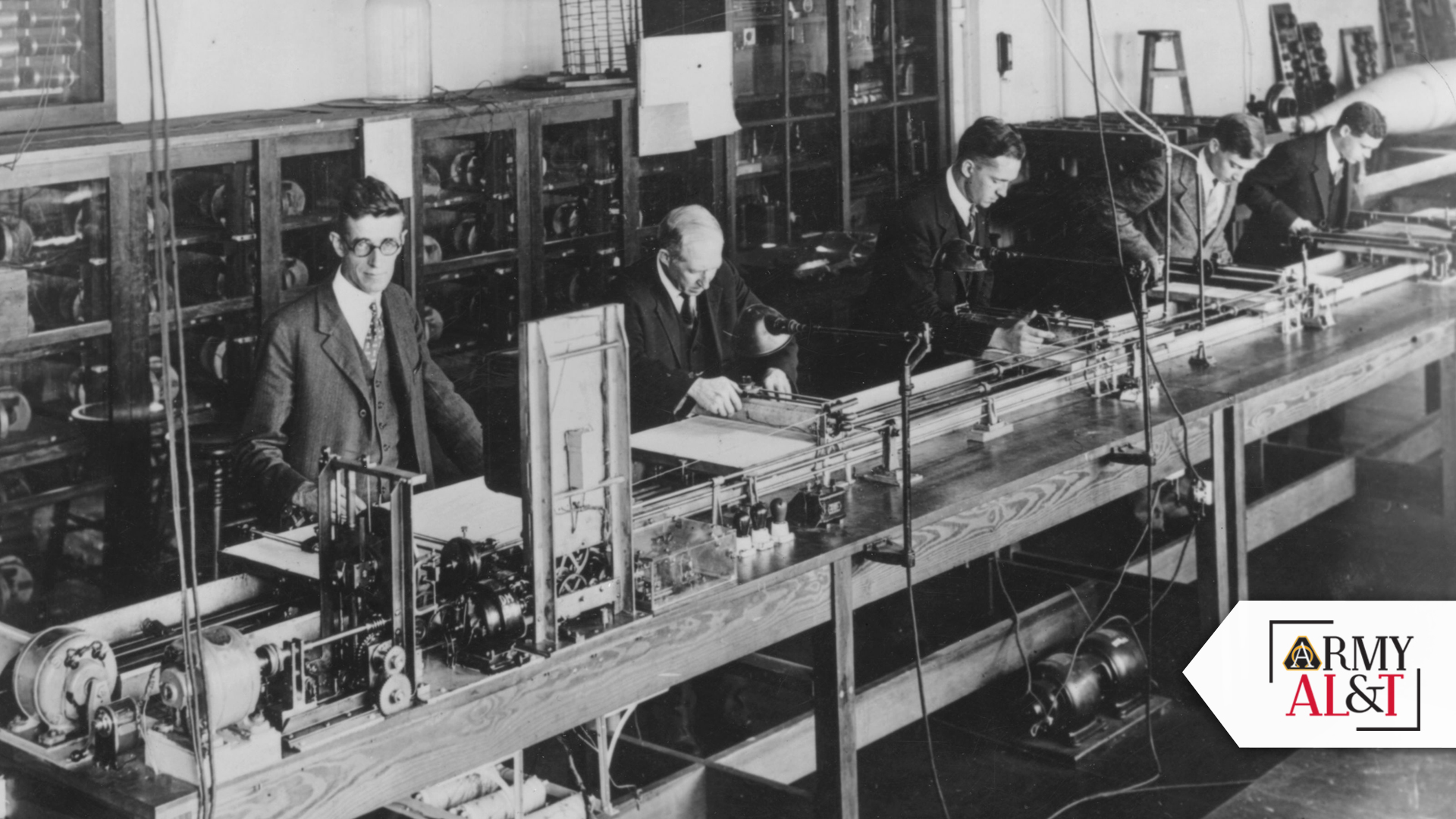

THE FIRST OF ITS KIND: Number Crunching circa 1935: U.S. electrical engineer and scientist Dr. Vannevar Bush (1890 – 1974) at the left, where an electrical brain solves mathematical problems far too complex for the human mind. (Photo by General Photographic Agency/Getty Images)

BEFORE SOFTWARE, THERE WERE COMPUTERS

The Army’s urgent wartime need created the computer industry in the mid-20th century. Can it pull another rabbit out of a hat to address today’s software acquisition challenges?

by Steve Stark

During World War II, the Army had an intractable problem that required a novel solution: New weapons that required firing tables were coming online, but the process for creating the tables was so laborious that the human brains that calculated them couldn’t keep up. From that need, the mid-20th century’s computer industry was born. Now the Army faces different challenges in software acquisition.

In “Gigantic Computer Industry Sired by Army’s World War Needs,” from the December 1963-January 1964 edition of Army Research and Development, the predecessor to Army AL&T, Daniel Marder and W. D. Dickinson wrote that “Today’s multi-billion [sic] dollar computer industry … was spawned as a result of the U.S. Army’s urgent need for enormous amounts of firing tables and other ballistic data during World War II.” At the time, Dickinson was assistant to the director of the Army’s Ballistic Research Laboratories at Aberdeen Proving Ground, Maryland, part of the Army Research Office.

Weapons that lobbed munitions far beyond what a Soldier could see were difficult to aim. Firing tables were the solution. They consisted of calculations that considered the weight of the munition, the power of the propellant and the angle of the howitzer, for example, and told how to set the weapon before firing. Figuring all that meant a lot of calculations—for human computers.
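As a rough illustration of the kind of arithmetic behind a firing table, the following Python sketch steps a shell's flight forward in small time increments and tabulates range against elevation. Everything in it is an assumption made for illustration: the function names, the muzzle velocity, the single drag coefficient and the flat-ground, no-wind physics are hypothetical and are not drawn from the Army's actual methods, which had to account for many more variables.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2


def shot_range(muzzle_velocity, elevation_deg, drag_coeff=0.0, dt=0.01):
    """Step a projectile's flight forward in time and return the horizontal
    distance (in meters) at which it falls back to launch height.

    drag_coeff is a hypothetical lumped constant (1/m); 0.0 means no air.
    """
    theta = math.radians(elevation_deg)
    vx = muzzle_velocity * math.cos(theta)
    vy = muzzle_velocity * math.sin(theta)
    x = y = 0.0
    while True:
        speed = math.hypot(vx, vy)
        # Drag opposes the motion and grows with the square of the speed.
        ax = -drag_coeff * speed * vx
        ay = -G - drag_coeff * speed * vy
        vx += ax * dt
        vy += ay * dt
        new_x = x + vx * dt
        new_y = y + vy * dt
        if new_y < 0.0:
            # Interpolate to the point where the height crosses zero.
            frac = y / (y - new_y)
            return x + frac * (new_x - x)
        x, y = new_x, new_y


def firing_table(muzzle_velocity, elevations_deg, drag_coeff=0.0):
    """Return a list of (elevation in degrees, range in meters) rows."""
    return [
        (elev, shot_range(muzzle_velocity, elev, drag_coeff))
        for elev in elevations_deg
    ]


if __name__ == "__main__":
    # Illustrative inputs only, not data for any real weapon.
    for elev, rng in firing_table(300.0, [15, 30, 45, 60, 75], drag_coeff=1e-4):
        print(f"{elev:>3} deg  {rng:10.1f} m")
```

Each row requires thousands of small steps, and a real table needed many rows for each combination of gun, shell and charge, which suggests why the work overwhelmed human computers.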

INSERT DISK HERE: Saving work, the old fashioned way. (Getty Images)

SOFTWARE-FREE COMPUTERS

To relieve considerable backlogs, a group at the U.S. Army Ballistic Research Laboratories (BRL), Aberdeen Proving Ground, Maryland, led by the officer-in-charge of computations, Lt. Col. Paul N. Gillon, set out to “revolutionize methods of calculations.” BRL, “in addition to its own Bush Differential Analyzer [sic], had been using another ‘Bush machine’ at the University of Pennsylvania,” according to the article.

Vannevar Bush invented the differential analyzer in the 1920s. It was a mechanical computer that computed digits by mechanical means, much as adding machines and cash registers did. The computer was powered by electricity, but it was not electronic.

“It was here [at BRL] that the idea was originated by Dr. J. Mauchly for an entirely new type of computational machinery—a machine that would calculate by the lightning impulses of electron tubes.” Mauchly, with assistance of J. P. Eckert, Jr., “prepared the original outline of the technical concepts underlying electronic computer design.” They would use the Bush machine as a model and create an electronic prototype that could do the calculations not only faster but without the physical limitations of metal plates and gears that the mechanical computer used.

Mauchly and Eckert were “the scientists credited with the invention of the Electronic Numerical Integrator and Computer (ENIAC),” according to the Massachusetts Institute of Technology.

As important as software would be, no one gave a lot of thought to it. During World War II, the only sort of software that existed was literal soft wares (linens, clothing and the like) sold in the “software” sections of department stores. Even in the early editions of Army Research and Development, software either didn’t appear or was referred to as “so-called software.” It seemed incidental to the all-important computer itself.

ENIAC’s predecessor mechanical computers are important to understanding why computer hardware and software seemed inseparable. Such machines were sophisticated but narrowly purposed. The coding was in the hardware. To change the output, the user changed the settings of the hardware. ENIAC was modeled on that, as were the original mainframes. Those early electronic computers were in high demand and available by appointment only, and the users would bring their own software on punch cards or magnetic tape.

When it became clear what ENIAC and its successors could do with numbers, users quickly envisioned what else they might do.

STONE AGE COMPUTING: Antiquated computer complete with a single floppy disk drive. (Getty Images)

WATERFALL

In the 1960s, software as an engineering specialty was still in its infancy, and had only recently been designated “software engineering” to help give it the credibility it deserved alongside computer science. These days, the software-centered businesses of Google, Meta, Amazon, Netflix and Apple are worth trillions of dollars. All make some kind of hardware, but the magic and the money live in the software.

Once software became a fact of life, it almost immediately was in crisis. In the mid to late 1960s and for much of the next 20 years, the industry considered software bloated, buggy, over budget and dangerous, and there were too few software engineers to keep it on schedule.

In recent years, DOD stakeholders and reformers have lamented the “waterfall” method of software development, which prevailed in the 1960s and, despite its advanced age, still has not been retired.

The diagram that led to the name, in a paper by Dr. Winston W. Royce called “Managing the Development of Large Software Systems,” was more a description of how things were done in 1970 and less a suggestion of how they should be done. The name appears to have come later, in a 1988 paper by Barry Boehm of the TRW Defense Systems Group, “A Spiral Model of Software Development and Enhancement.” Boehm wrote that the waterfall had improved upon the “stage-wise” (or stage-by-stage) method, but he sought to replace it with spiral development, “an iterative and risk-driven model of software development” that would fix waterfall’s shortcomings.

In some respects, the waterfall method matched the way that Congress funded (and still funds) DOD’s software acquisition: Create extensive requirements and develop everything with a particular end state in mind. Buy it all upfront. That works for trucks and tanks, but it doesn’t fit software as well as iterative development methods like DevSecOps and Agile, contemporary methods that nearly all commercial developers use and that the Army would like to emulate.

EVOLVING TECHNOLOGY: Technology is ever changing. Years ago information was stored on one computer and a floppy disk was used to transfer data to another computer. Today, devices can interact, sync up and share data with multiple devices instantaneously. (Getty Images)

CONCLUSION

In the last few years, as it has struggled with software acquisition, DOD has promulgated different pathways for acquiring different kinds of systems. Software is one pathway in the Adaptive Acquisition Framework, which is intended to simplify and streamline acquisition.

Establishing that pathway didn’t suddenly make software acquisition better, but it is helping to change the DOD approach. According to reporting by the Government Accountability Office, “GAO’s ‘Agile Assessment Guide’ emphasizes the early and continuous delivery of working software to users, and industry recommends delivery as frequently as every 2 weeks for Agile programs. Yet, as of June 2021, only six of 36 weapon programs that reported using Agile also reported delivering software to users in less than 3 months.”

Big problems facing DOD today, GAO said, are “staffing challenges related to software development, such as difficulty hiring government and contractor staff.” Competition for talent is so intense that it’s been called a war. In 1945, the Army replaced hundreds of people doing the math for firing tables with a revolutionary, first-of-its-kind machine that spawned an entirely new industry.

Back then, the issue that the Army faced wasn’t a personnel problem. Indeed, the Army showed that it was a technological problem, and then solved it with an entirely new kind of machine. Today’s “staffing challenges” also may not, in the end, have a personnel solution. Whether the solution will be technological, only time will tell.

Solutions to challenges tend to create other, unforeseen challenges. Back in the 1940s, no one could have foreseen the lack of sufficient talent for the Army’s software needs because most people weren’t clear on what software was. Today, that model has flipped. Software is central and computers are commodities.

The Army isn’t likely to find another magical rabbit in a hat that will make all its software acquisition woes dissolve. Instead, the solution will come—as it did then—when smart people look to recreate tools and processes that make available talent sufficient to the task. Colorless money—funding not lashed to research, development, test and evaluation, for example—has its proponents, and may be part of the solution, but only a part. Whatever the ultimate solution, it seems inevitable that it will pose new challenges.

For more information on the issues facing Army acquisition—yesterday and today—go to https://asc.army.mil/web/magazine/alt-magazine-archive/.

STEVE STARK is senior editor of Army AL&T. He holds an M.A. in creative writing from Hollins University and a B.A. in English from George Mason University. In addition to more than two decades of editing and writing about the military, science and technology, he is, as Stephen Stark, the best-selling ghostwriter of several consumer-health oriented books and an award-winning novelist.